All Activity

- Past hour

-

2026-2027 Super El Nino

snowman19 replied to Stormchaserchuck1's topic in Weather Forecasting and Discussion

100%. 1997-98 peaked the final week of November then steadily weakened right through the end of March. 2015 also peaked the last week of November then weakened throughout the entire winter. Twitter kept wishcasting that the weakening was going to somehow “save” that winter and it was going to turn into an arctic cold tundra with mountains of snow the rest of the way. It also didn’t help that JB was hyping nonstop that it was a super “migrating Modoki” El Niño and said the analogs were 1957-58, 1965-66, 1976-77, 1977-78, 2002-03, 2009-10 and 2014-15 for months on end in the fall and beginning of winter. The weenies bought right into it, hook, line and sinker

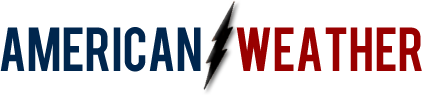

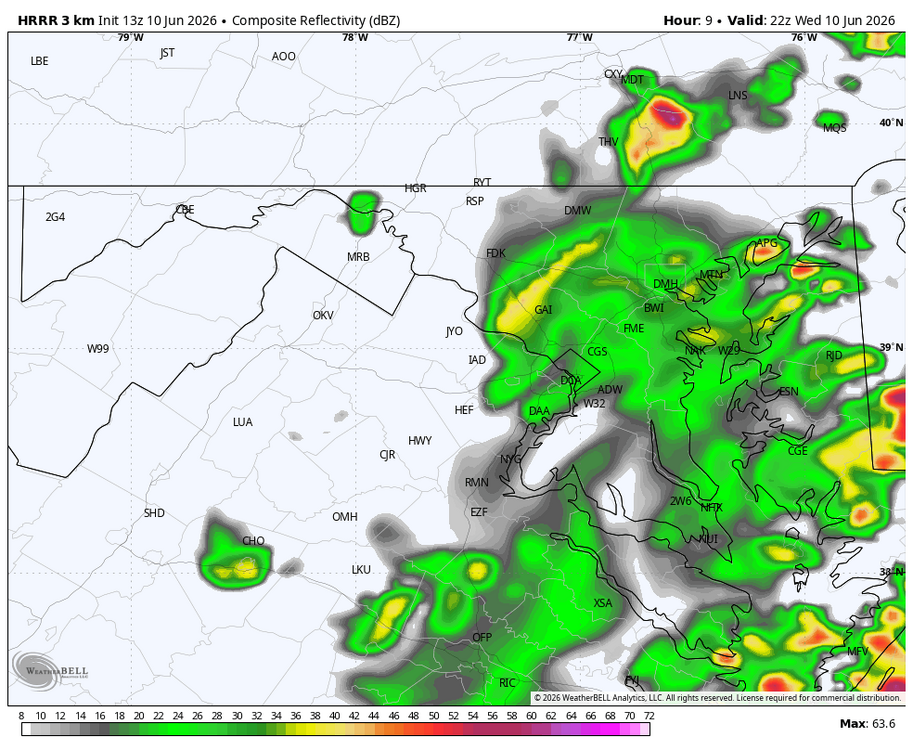

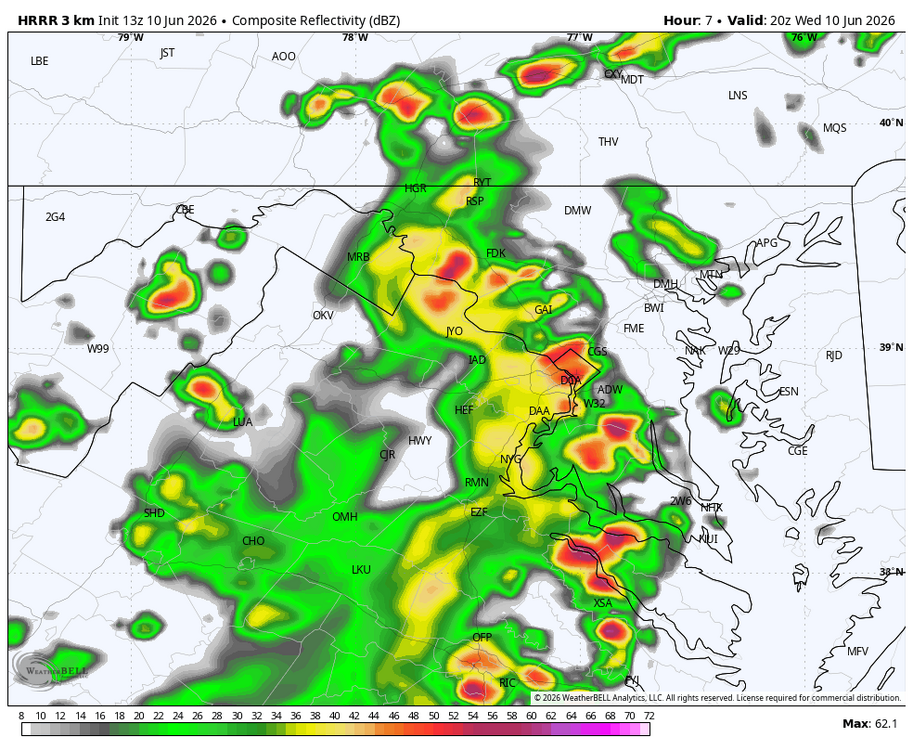

Tomorrow's updated outlook from SPC comes out in a few hours; we'll see if they get a little more bullish.

Day 2 Convective Outlook
NWS Storm Prediction Center Norman OK
1245 AM CDT Wed Jun 10 2026

...Mid-Atlantic into central Appalachians...

Daytime heating coupled with dewpoints in the 60s to low 70s are expected to yield a weakly capped and moderate to strongly unstable afternoon air mass. Forcing for ascent associated with the disturbances mentioned in the synopsis are expected to foster multiple clusters of thunderstorms capable of damaging winds, especially if organized cold pools can develop. There is some model signal that a corridor of slightly stronger low-level and deep-layer shear will materialize across the lower Hudson Valley Thursday afternoon, which would support some potential for supercells capable of large hail and perhaps a brief tornado.

..Mead.. 06/10/2026

-

There are no flood watches out yet, but the CAMs are all dumping several inches of rain across the SE IA, N IL, NE MO area.

-

Managed a robust .6 out in the sticks before everything fizzled to my east

-

RIP Sycamore IL

-

That's probably a reference to the heat index.

-

79 / 66 DT are coming up. Dealing with clouds and scattered storms/showers today, otherwise warm/muggy, low - mid 80s. Hazy Hot Humid Thu - Sun with storm chances focused on Thu pm - Friday but chances for isolated storms each of those days. It'll be interesting to see how much rain can fall from these storms in the 4-day period Thu - Sun. Moderate Mon - Tue before trough into the Midwest / Northeast 6/17 - 6/21. Beyond there warmer - we'll see how much heat can build in the period ahead of next month.

-

About 0.13” for the morning round. Let’s go hrrrr!

-

Short-range models seem to be playing catch-up with the potential intensity/development this afternoon... it will be interesting to see what happens.

-

Looks like 3 days of severe potential starting tomorrow

-

Rain going poof here as it moves east.

-

@Ian was always overrated - meh. All kidding - missed you, dude!

-

Does it take it all the way East, even if it were to happen?

-

J.Mike started following Capital Weather independent of the Post

-

I joined up. Love the great email summary I get every day. Good luck to all.

-

Ok gents, keep me up to date with what’s happening locally, and I’ll keep you guys up to date on what’s happening in St Thomas.

-

Yes, the lack of humidity has been noticeable thus far. Desert life was a foreign concept before this summer

-

Also we are getting Dewpoints in the mid 60s instead of low 70s, so it's kinda a wash from a heat index standpoint
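For anyone who wants to check the "wash" idea with numbers, here is a rough sketch. It assumes the NWS Rothfusz heat index regression and a Magnus-type approximation to turn dewpoint into relative humidity. The function names and the temperature/dewpoint pairs are made up for illustration; they are not observations from this thread.

```python
import math


def saturation_vapor_pressure_hpa(temp_c: float) -> float:
    """Magnus-type approximation of saturation vapor pressure over water (hPa)."""
    return 6.112 * math.exp(17.62 * temp_c / (243.12 + temp_c))


def relative_humidity_pct(temp_f: float, dewpoint_f: float) -> float:
    """Relative humidity (%) from air temperature and dewpoint, both in deg F."""
    temp_c = (temp_f - 32.0) * 5.0 / 9.0
    dewpoint_c = (dewpoint_f - 32.0) * 5.0 / 9.0
    return (100.0 * saturation_vapor_pressure_hpa(dewpoint_c)
            / saturation_vapor_pressure_hpa(temp_c))


def heat_index_f(temp_f: float, dewpoint_f: float) -> float:
    """Approximate heat index (deg F) following the NWS Rothfusz procedure."""
    t = temp_f
    rh = relative_humidity_pct(temp_f, dewpoint_f)

    # Simple formula, averaged with the temperature; used when the result is below 80 F.
    simple = 0.5 * (t + 61.0 + (t - 68.0) * 1.2 + rh * 0.094)
    simple = (simple + t) / 2.0
    if simple < 80.0:
        return simple

    # Full Rothfusz regression (T in deg F, RH in percent).
    hi = (-42.379
          + 2.04901523 * t
          + 10.14333127 * rh
          - 0.22475541 * t * rh
          - 0.00683783 * t * t
          - 0.05481717 * rh * rh
          + 0.00122874 * t * t * rh
          + 0.00085282 * t * rh * rh
          - 0.00000199 * t * t * rh * rh)

    # Adjustments for very dry or very humid air.
    if rh < 13.0 and 80.0 <= t <= 112.0:
        hi -= ((13.0 - rh) / 4.0) * math.sqrt((17.0 - abs(t - 95.0)) / 17.0)
    elif rh > 85.0 and 80.0 <= t <= 87.0:
        hi += ((rh - 85.0) / 10.0) * ((87.0 - t) / 5.0)
    return hi


if __name__ == "__main__":
    # Hypothetical pairings: hotter with mid-60s dewpoints vs. cooler with low-70s dewpoints.
    for temp_f, dewpoint_f in [(94.0, 65.0), (90.0, 72.0)]:
        print(f"T={temp_f:.0f}F Td={dewpoint_f:.0f}F -> HI={heat_index_f(temp_f, dewpoint_f):.1f}F")
```

Swap in your own station's high and dewpoint to see how much a few degrees of extra heat offsets the lower dewpoint.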

-

The extremely dry soils in parts of NC favor highs there being a little hotter and threatening records compared with most other areas of the SE. It will be interesting to see what actually happens. Much will depend on the pattern of mid-afternoon pop-up thunderstorm activity and how far-reaching the associated outflow boundaries are. OTOH, the ATL area, where it has been raining a good bit more, isn’t favored to threaten record highs. Forecasts there are mainly in the lower 90s.

-

2026-2027 Super El Nino

40/70 Benchmark replied to Stormchaserchuck1's topic in Weather Forecasting and Discussion

See, I would term that "what other hemispheric influences are competing to alter it and how"... i.e., while some are accentuated, other features are blunted. That is the essence of a lagging RONI value... whereas MEI/ONI are more likely to just be universally weaker and thus more prone to polar influences.

- Today

-

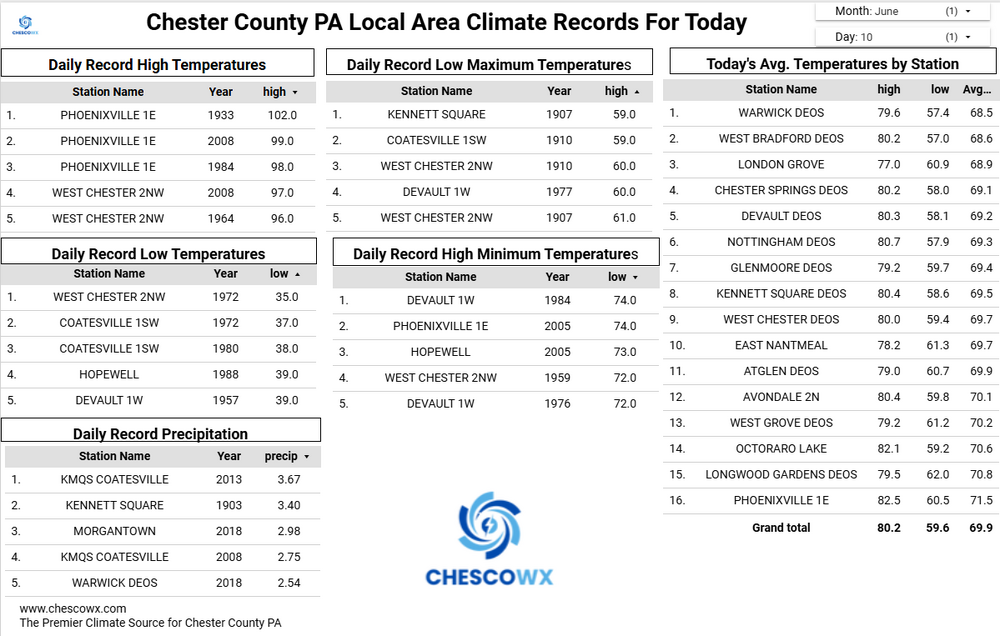

Central PA Summer 2026 Discussion/Obs Thread

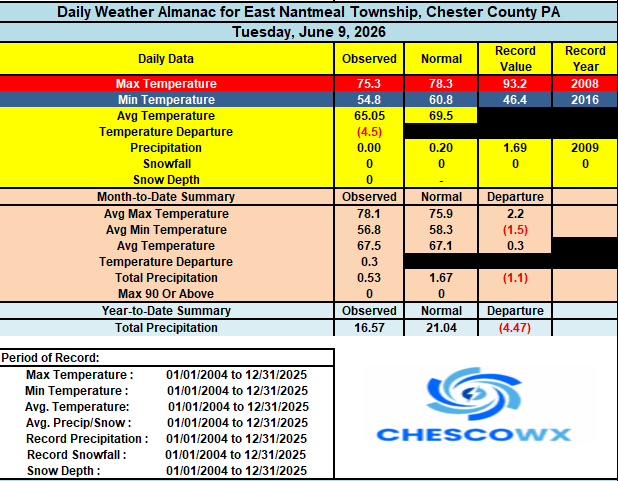

ChescoWx replied to Voyager's topic in Upstate New York/Pennsylvania

We are only running around 80% of normal rainfall for the year to date here in East Nantmeal. It looks like we should see at least some showers and thunderstorms today that could give us some welcome rain. A heat advisory goes into effect tomorrow, with highs both Thursday and Friday in the low 90s and increasing humidity. Shower chances will be around later in the day on both Thursday and Friday. The weekend looks cooler but still warm before we turn much cooler to start the new work week. High temperatures both Monday and Tuesday may only be in the 70s.

E PA/NJ/DE Spring 2026 Obs/Discussion

ChescoWx replied to PhiEaglesfan712's topic in Philadelphia Region

We are only running around 80% of normal rainfall for the year to date here in East Nantmeal. It looks like we should see at least some showers and thunderstorms today that could give us some welcome rain. A heat advisory goes into effect tomorrow, with highs both Thursday and Friday in the low 90s and increasing humidity. Shower chances will be around later in the day on both Thursday and Friday. The weekend looks cooler but still warm before we turn much cooler to start the new work week. High temperatures both Monday and Tuesday may only be in the 70s.

I give this post a 60% chance to be successful with a 20% chance for boom and 20% chance to bust. with love, formerly Leesburg 04

-

80 at 9:35. "10 after 10" 'll be an interesting test today. We may be 82 or 83 at this rate by the top of the hour, which if that old adage bears any usefulness ...sends us about 7 deg above MOS' around the BDL-FIT-ASH-MHT horn. Although it's probably only 76 at BDL at 10 ... 10 after 10 isn't precise either.