Everything posted by MegaMike

-

First Legit Storm Potential of the Season Upon Us

MegaMike replied to 40/70 Benchmark's topic in New England

In my opinion, there's too much trust in AI for weather prediction. I've mentioned this a few times, but it was made operational recently. There's nothing wrong with current NWP, except that (a) it takes longer to run and (b) it requires a lot of resources vs. AI. There is no significant evidence the EC-AIFS/AIGFS outperforms NWP for sensible weather at the surface during inclement weather (please provide a source if I'm wrong). For AI, nobody knows how forcing(x,y,z,t) is calculated (it doesn't rely on traditional methods). i.e., what is 1+1? Human = 1 + 1 == 2 ||| AI = :performs multi-dimensional math on 'n' fields: == 2. Do you trust that? Even if the initial state of the atmosphere is captured flawlessly, there's no guarantee AI will perform well. AI is great when there is no known relationship/correlation between a predictor and many predictands. Weather is relatively predictable, so I don't find AI useful unless the fields are bias-corrected then ingested back into data assimilation grids. If the AIs outperform NWP for this, *** and it evaluates well ***, I'll take it a little more seriously. Who knows... Maybe truncating/rendering certain fields may increase its accuracy for this one event <AND/OR> data assimilation is poor at the current location(s) where the disturbance(s) is/are, and AI could use historic events to predict this event with some level of accuracy. -

First Legit Storm Potential of the Season Upon Us

MegaMike replied to 40/70 Benchmark's topic in New England

Good observation. For the ECMWF/ECMWF-AI, the operational ECMWF has a horizontal resolution of ~9km. In comparison, the ECMWF-AI is trained on the ERA5 reanalysis dataset which has a resolution of ~31km (its operational product must be run on the same grid specifications). On top of resolution discrepancies, to my understanding, the ECMWF-AI uses its own, statistical relationship (AI and not traditional microphysical/physical schemes) to determine precipitation too. That likely compounds the resolution issue you mentioned, as well. I'm heavily leaning away from AI models for this event (and likely, for all events until it proves its accuracy for sensible weather). -

First Legit Storm Potential of the Season Upon Us

MegaMike replied to 40/70 Benchmark's topic in New England

Before 12z gets roll'n, here's what the 06z ensembles depict for QPF-mean and SFC MSLP: Will this trend into something like yesterday's 12z GFS? Probably not (as other mets mentioned), but at least the 0.5" QPF-mean contour is nearby (~Cape Cod; trying to be optimistic for the pessimistic weenies) for each ensemble. Given there's still a decent amount of spread at 96hrs, a lighter/moderate event may still be possible for eastern areas. I would like to see the EPS tick west at 12z, otherwise, I'd lower expectations if you haven't already. -

First Winter Storm to kickoff 2025-26 Winter season

MegaMike replied to Baroclinic Zone's topic in New England

I completely agree. It was recently made operational (Feb. 2025) and its primary purpose (imo at the current moment) is to provide an efficient (few resources and fast to simulate), medium/long range ensemble... I consider it a less accurate version of the CFS, honestly. They're years, if not decades, away from making the AIFS comparable to any traditional NWP modeling system. I'm not even fully sold on that being a possibility either... I'll take it seriously when the AIFS outperforms the IFS at the surface and not 500-50mb. Vendors will provide any modeling system to stand out, unfortunately... At this range, I'd primarily consider the ECMWF, GFS, CMC, ICON, and UKMET (with more emphasis on their ensembles). Maybe look at trends of the AIFS for S&Gs. -

I respect the effort. It takes a long time doing an analysis on one storm. You did it for 200+ events and manually conducted/plotted an interpolation. That's wild.

-

November 2025 general discussions and probable topic derailings ...

MegaMike replied to Typhoon Tip's topic in New England

Pretty cool looking! Consensus is, that's the exhaust plume from the European Space Agency's Ariane 6 rocket (launched at Kourou, French Guiana). -

Absolutely not. Maybe it performed well for this one event, but that doesn't mean it's better than traditional NWP. You really need to conduct a thorough evaluation at the surface and aloft (for forcing variables) to make such conclusions. As an example, it's possible something can be right for the wrong reason. You wouldn't know unless you evaluated it... So, if AI did well with forcing, wrt NWP, over a duration of 1 year, then you can entertain the idea. This is just imo, but we're years, if not decades, away from this. We likely need to significantly improve data assimilation for this to occur.

-

-

July 2025 Obs/Disco ... possible historic month for heat

MegaMike replied to Typhoon Tip's topic in New England

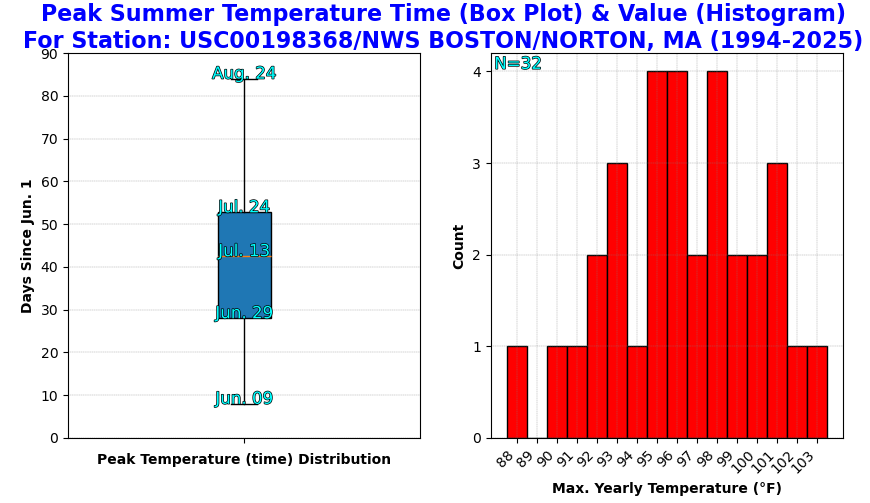

More! (Tip's writing ability) x (Wiz's excitement over New England, severe weather) I'm not a fan of heat, so I ran a script to figure out the median date of the max. (Summer) daily temperature via GHCND .csv files (TMAX field). Based on what I ran (32 different records/1 per-year from 1994-2025), the median date is ~Jul. 13th for KBOX (labeled, 'NWS BOSTON/NORTON' at https://www.ncei.noaa.gov/pub/data/ghcn/daily/ghcnd-stations.txt). After July 24th, there's a good chance (75%) KBOX has already experienced its warmest day of the year. Just for S&Gs. -
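A minimal sketch of the kind of script described above, for anyone who wants to try it on their own station. It assumes a CSV with `DATE` (YYYY-MM-DD) and `TMAX` columns; the column names, the June-August window, and the sample rows are assumptions for illustration, not the original script or real KBOX data.

```python
import csv
import io
from datetime import date
from statistics import median

def summer_peak_days(rows):
    """Map each year to the day-of-year of its warmest June-August TMAX."""
    best = {}  # year -> (tmax, day_of_year)
    for row in rows:
        raw = (row.get("TMAX") or "").strip()
        if not raw:
            continue  # skip days with a missing TMAX
        day = date.fromisoformat(row["DATE"])
        if day.month not in (6, 7, 8):
            continue
        tmax = float(raw)
        # On ties, the first date encountered in file order is kept.
        if day.year not in best or tmax > best[day.year][0]:
            best[day.year] = (tmax, day.timetuple().tm_yday)
    return {year: doy for year, (_, doy) in best.items()}

# Made-up sample rows (not real observations).
SAMPLE = """DATE,TMAX
2023-07-09,31.1
2023-07-10,35.0
2023-08-02,33.3
2024-06-20,34.4
2024-07-13,36.1
2025-07-20,37.2
2025-07-21,
2025-08-11,35.6
"""

peaks = summer_peak_days(csv.DictReader(io.StringIO(SAMPLE)))
median_doy = median(peaks.values())
print(peaks, median_doy)
```

For a "75% of years have peaked by this date" figure, `statistics.quantiles(peaks.values(), n=4)[2]` gives the upper quartile of the same day-of-year values.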

July 2025 Obs/Disco ... possible historic month for heat

MegaMike replied to Typhoon Tip's topic in New England

Definitely! If CM1 missed this one (Reno), it likely can't resolve tornadoes unless (maybe) you beef up the model specs. The amount of resources to even run that simulation still gets me... A quarter of a trillion grid points, for a 42 minute simulation (time steps = 0.2s), that spans an area of ~5,600 miles^2 (~6x size of RI), and it took their cluster 3 days to run. That's crazy. Imagine running that for the entire U.S.? -
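To put those numbers in perspective, here is a quick back-of-the-envelope calculation using only the figures quoted above (grid points, simulated time, time step, wall-clock time). The variable names are just for illustration, and the "grid-point updates" count is a rough proxy for work, not a measure of actual floating-point operations.

```python
# Figures quoted in the post above.
GRID_POINTS = 250_000_000_000   # "a quarter of a trillion"
SIM_MINUTES = 42
TIME_STEP_MS = 200              # 0.2 s, kept in milliseconds to stay in integers
WALL_CLOCK_DAYS = 3

# Number of model time steps needed to cover the simulated period.
time_steps = SIM_MINUTES * 60 * 1000 // TIME_STEP_MS

# Every grid point is advanced once per time step.
point_updates = GRID_POINTS * time_steps

# How much slower than real time the run was.
slowdown = (WALL_CLOCK_DAYS * 24 * 60) / SIM_MINUTES

print(f"time steps: {time_steps:,}")
print(f"grid-point updates: {point_updates:.2e}")
print(f"wall clock vs. simulated time: ~{slowdown:.0f}x")
```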

July 2025 Obs/Disco ... possible historic month for heat

MegaMike replied to Typhoon Tip's topic in New England

Right? Super cool! I believe they used VAPOR to create most/all of their graphics. Agreed in that I doubt they'll be able to replicate their success for most other tornadoes. -

July 2025 Obs/Disco ... possible historic month for heat

MegaMike replied to Typhoon Tip's topic in New England

My advisor would always tell me that! Someone did manage to simulate a tornado (EF5 in El Reno 2011) using a modeling system intended for very fine atmospheric phenomena (CM1): https://www.mdpi.com/2073-4433/10/10/578 If you wanted to, had the resources (19,600 nodes -> 672,200 cores & 270 TB worth of space), and had a lot of time, you could run the simulation too! In serial mode (single CPU), it'd take decades for this simulation to complete. Really, we have the modeling systems to run highly accurate simulations, but unfortunately, data assimilation and (relatively) limited resources are inhibiting us. A nice video of the results: -

July 2025 Obs/Disco ... possible historic month for heat

MegaMike replied to Typhoon Tip's topic in New England

Logically, it doesn't make sense to me: Let's bring in data scientists to create a standalone meteorological modeling system lol. I'm sure it'll get better (build dat' training dataset), but for now, I'd say they're 1-2 decades away from making anything comparable to traditional NWP. I still think using AI to bias correct ic/bcs is the way to go. I know that has merit. Yea, it's a bit misleading... They used HRRR analysis as ground truth to make the conclusion that 'HRRR-Cast is comparable to HRRR...' I'd still rather see evaluations/comparisons at METAR/radiosonde sites. -

July 2025 Obs/Disco ... possible historic month for heat

MegaMike replied to Typhoon Tip's topic in New England

New model development from NOAA: The Global Systems Laboratory is set to release an experimental, high resolution AI product called, 'HRRR-Cast.' It's been trained on 3 years worth of HRRR analysis data... Lately, computational efficiency is starting to dominate the modeling world and I'm incredibly skeptical of it. According to https://arxiv.org/html/2507.05658v1 (assuming this is the same modeling system): "HRRRCast outperforms HRRR at the 20 dBZ threshold across all lead times, and achieves comparable performance at 30 dBZ." "HRRRCast can produce ensembles that quantify forecast uncertainty while remaining far more computationally efficient than traditional physics-based ensembles." Big caveat: The study used HRRR analysis data as 'ground truth.' Therefore, it's no surprise that HRRR-Cast, and their other AI model, performed well compared to diagnostic HRRR/forecast output. Until I see AI outperform conventional NWP at METAR/radiosonde sites (surface and aloft), I'm not going to get excited. -
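As a rough illustration of what an evaluation against station observations involves, the sketch below computes mean bias and RMSE for paired forecast/observation values. The function name and all numbers are hypothetical; a real evaluation would pair model output with METAR/radiosonde reports across many sites, variables, and lead times.

```python
import math

def bias_and_rmse(forecast, observed):
    """Mean bias and RMSE for paired forecast/observation values."""
    if len(forecast) != len(observed) or not forecast:
        raise ValueError("need equal-length, non-empty series")
    errors = [f - o for f, o in zip(forecast, observed)]
    bias = sum(errors) / len(errors)
    rmse = math.sqrt(sum(e * e for e in errors) / len(errors))
    return bias, rmse

# Hypothetical 2 m temperatures (deg C) at one station, same valid times.
obs = [1.0, 5.0, 6.0, 3.0]
model_a = [2.0, 4.0, 6.0, 3.0]   # errors cancel out on average
model_b = [3.0, 7.0, 8.0, 5.0]   # consistently too warm

a_bias, a_rmse = bias_and_rmse(model_a, obs)
b_bias, b_rmse = bias_and_rmse(model_b, obs)
print(f"model A: bias={a_bias:+.2f}, rmse={a_rmse:.2f}")
print(f"model B: bias={b_bias:+.2f}, rmse={b_rmse:.2f}")
```

Scoring both models against independent observations, rather than against one model's own analysis, avoids giving either of them a built-in advantage.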

Embrace the NAM while you can, weenies. Change is coming.

-

It's an experimental model that doesn't predict moisture/heat flux based on fluid dynamics. As a result, it's totally unreliable in my opinion... It's probably the reason why the NWS doesn't refer to it in their forecast discussions. Can't wait to see how it performs during the warm season. With limited training data on hurricanes, I expect it to perform terribly with tropical disturbances.

-

I agree with you both. To evaluate snowfall, you really need to evaluate SWE, as well. For that matter, you'd need to evaluate forcing fields too (ensure SWE was predicted accurately for the right reasons). If SWE was underpredicted, but a snowfall algorithm performed well, that algorithm isn't showing accuracy... It's showing a bias. Unfortunately, snowfall evaluations are tricky because of gauge losses wrt observations. Not everyone measures the same either... Can of worms, snowfall is. A met mentioned this earlier too, but the more dynamic an algorithm is, the more likely errors are to compound. The Cobb algorithm is logically ideal for snowfall prediction, but compounding error throughout all vertical layers of atmosphere likely inhibits its accuracy. Snowfall prediction sucks, which is probably why there are only a handful of publications. Otherwise, these vague algorithms wouldn't be widely used by public vendors.... It's the bottom of a very small barrel.
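A small worked example of the "right for the wrong reason" point, treating a snowfall algorithm as, in effect, a snow-to-liquid ratio applied to SWE (a simplifying assumption; algorithms such as Cobb are more involved). The function name and all numbers are hypothetical.

```python
def snowfall_check(fcst_swe, fcst_snow, obs_swe, obs_snow):
    """Compare forecast vs. observed SWE, snowfall, and implied snow-to-liquid ratio."""
    return {
        "swe_error": fcst_swe - obs_swe,
        "snow_error": fcst_snow - obs_snow,
        "fcst_ratio": fcst_snow / fcst_swe,
        "obs_ratio": obs_snow / obs_swe,
    }

# Hypothetical event (inches): the model produced half the observed liquid,
# yet the snowfall algorithm still landed on the observed snowfall total.
result = snowfall_check(fcst_swe=0.5, fcst_snow=10.0, obs_swe=1.0, obs_snow=10.0)
for name, value in result.items():
    print(f"{name}: {value}")
```

Here the snowfall error is zero only because the implied forecast ratio (20:1) is double the observed one (10:1), which offsets the SWE miss. That offset is the bias being described.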

-

I've never heard of Spire Weather before, but based on their website, it looks like it's another AI modeling system (someone correct me if I'm wrong). It doesn't take much to run an AI model... Especially since the source code for panguweather, fourcastnet, and graphcast is available online for free: https://github.com/ecmwf-lab/ai-models My recommendation: If they don't evaluate or provide modeling specifications, don't use it.

-

I'm not sure if you're being sarcastic, but please don't use the CFS for this. The CFS is a heavily truncated (low horizontal, vertical, and temporal resolution) modeling system solely intended for climate forecasting. It won't perform well with a dynamic beast (which may or may not occur).

-

I have a hard time trusting the NAM 3km due to an issue (possibly patched or related to a vendor?) caused by its domain configuration and dynamics/physics options... If I remember correctly, if a large-scale disturbance moved too quickly, a subroutine would sporadically calculate an unrealistic wind speed (only aloft) at certain sigma levels... Can't predict atmospheric flux if a forcing field is kaput. I need to find this case study... It's pretty interesting and it happened twice from ~2016-2020. The erratic nature of the NAM is off-putting too, but I'm thinking that's related to its ic/bcs... Before a system materializes, you're solely relying on ic/bcs from a regional modeling system. Those regional modeling systems cannot effectively predict, or even initialize, small-scale/convective features, which leads to significant error over time. The same forecast volatility would likely occur in other mesoscale modeling systems if they ran past 48 hours. While on topic: I would like to see vendors start using the RRFS (https://rapidrefresh.noaa.gov/RRFS/; currently under development)... especially for snowfall and precipitation forecasting. It's a unified, high resolution modeling system which runs every hour. Consider it like the GEFS, but with the HRRR. The NBM/HREF are great, but it's a waste of resources to post-process different mesoscale modeling systems onto a constant grid. Let's just be happy nobody mentioned the NOGAPS. Also not a fan of the ECMWF AI... That model is for data scientists, not meteorologists.

-

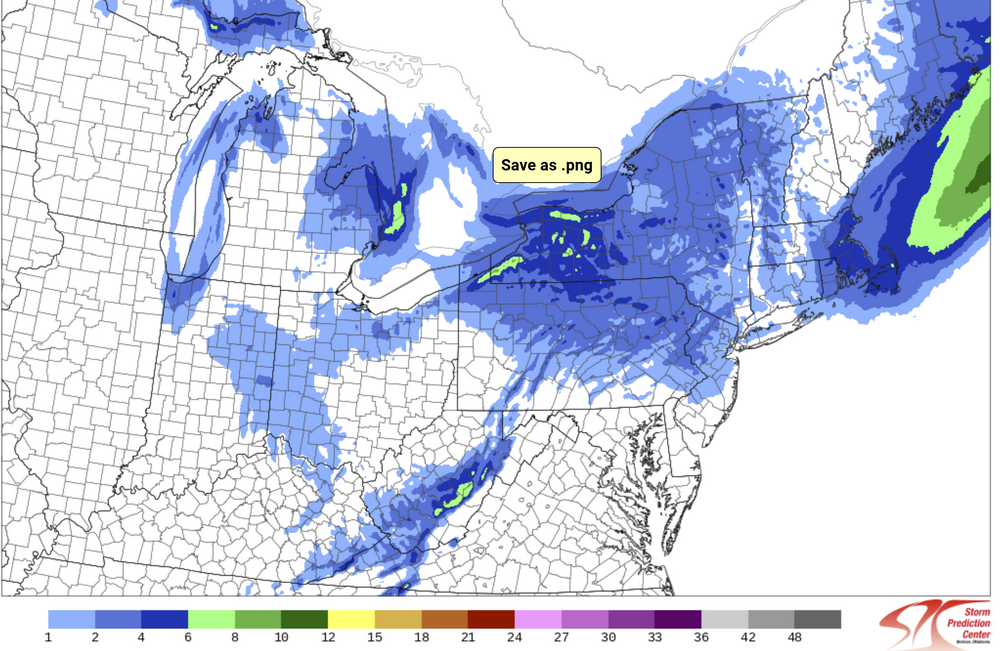

At this time, nobody should be relying too heavily on global (GFS, CMC, ECMWF) or regional (RGEM 10km, RAP 13km, NAM 12km, etc...) modeling systems. Stick with the mesos (HRRR 3km, WRF ARW, WRF ARW2, NAM 3km, etc...) and rely on data assimilation/nowcasting to follow any trends. That said, the 12z HREF (ensemble of mesos) increased mean snowfall for most areas in eastern MA and all of RI (compared to last night at 00z). It also expands accumulating snowfall westward.... More snow may be falling after this, but I'm too impatient to wait for the next panel... Follow it here: https://www.spc.noaa.gov/exper/href/?model=href&product=snowfall_024h_mean&sector=ne&rd=20241220&rt=1200

-

December 2024 - Best look to an early December pattern in many a year!

MegaMike replied to FXWX's topic in New England

Good eye/question! The most recent version of the GFS has 127 hybrid sigma-pressure (terrain following at the surface and pressure aloft) vertical profile layers. In the plot you attached, the vendor is/appears to be plotting vertical profile data that has been interpolated to mandatory isobaric surfaces (1000, 925, 850, 700, 500, 400, 300, 250, 200, 150, and 100 mb). I'm assuming the same vendor uses a diagnostic categorical precipitation type/intensity field to plot precipitation type/intensity, which considers all 127 native vertical profile layers. So the Skew-T is only showing you a select few isobaric surfaces although the native model output possesses plenty more. -
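To illustrate what "interpolated to mandatory isobaric surfaces" means in practice, here is a minimal sketch that interpolates a profile linearly in ln(p) onto the levels listed above. The function name, the choice of log-pressure interpolation, and the short made-up profile are assumptions for illustration; this is not the vendor's or NCEP's actual post-processing code.

```python
import math

MANDATORY_MB = [1000, 925, 850, 700, 500, 400, 300, 250, 200, 150, 100]

def interp_to_levels(p_native, values, p_targets=MANDATORY_MB):
    """Interpolate linearly in ln(p); None where a target lies outside the profile.

    Assumes all native pressures are distinct and positive.
    """
    # Order from highest pressure (near the surface) to lowest (aloft).
    pairs = sorted(zip(p_native, values), reverse=True)
    result = {}
    for target in p_targets:
        result[target] = None
        for (p_lo, v_lo), (p_hi, v_hi) in zip(pairs, pairs[1:]):
            if p_lo >= target >= p_hi:
                weight = (math.log(p_lo) - math.log(target)) / (
                    math.log(p_lo) - math.log(p_hi)
                )
                result[target] = v_lo + weight * (v_hi - v_lo)
                break
    return result

# Made-up temperatures (deg C) on a handful of "native" levels (mb).
native_p = [1000, 900, 800]
native_t = [20.0, 14.0, 8.0]
profile = interp_to_levels(native_p, native_t, p_targets=[1000, 850, 700])
print(profile)
```

Anything the native profile does between two output levels (a shallow warm nose, for example) is lost in the interpolated version, which is why a plot built from mandatory levels can look smoother than the native model output.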

I published a paper for the EPA regarding an air quality modeling system/data truncation, but I haven't collaborated with NCEP on atmospheric models/simulations. I do have experience with a bunch of different modeling systems though (in order): ADCIRC (storm surge), SWAN (wave height), WRF-ARW (atmospheric and air quality), UPP (atmospheric post-processor), CMAQ (air quality), ISAM (air quality partitioning), RAMS (atmospheric), ICLAMS (legacy atmospheric model).