-

Posts

566 -

Everything posted by MegaMike

-

It's hard to dispute the verification webpage. I guess it's possible results are skewed in favor of fair weather, but I agree with what Tip wrote a few days ago: I think most members focus on single events and get too emotionally biased. Anywho, as INS mentioned, the 12z EPS is a step back when looking at the mean...

-

Generally, the ECMWF outperforms the GFS, CMC, JMA, and UKMET for every variable (gph, wind, temperature, etc.), every isobaric surface, and all spatial (CONUS, N-Hem, etc.) and temporal (fcst hr 0-240) stratifications over the past 31 days. I'd say the UKMET is a close 2nd though (at least for gph). It even slightly outperforms the ECMWF from ~122-140hr. For visualization: https://www.emc.ncep.noaa.gov/users/verification/global/gfs/prod/atmos/grid2obs/hgt/ Regardless, I'm still placing more weight on ensembles. If the EPS improves/holds steady, I'll still follow the event.

-

How 'bout an EPS trend gif before its 12z cycle? Definite improvement on the mean since yesterday's 18z run. I wonder if models overcorrected last night given some sort of data assimilation feedback... We'll see!

-

The GEFS mean is a ~50-100 mile improvement. If the ECMWF + EPS look better at 12z, I'll feel more enthusiastic about this event.

-

Possible coastal storm centered on Feb 1 2026.

MegaMike replied to Typhoon Tip's topic in New England

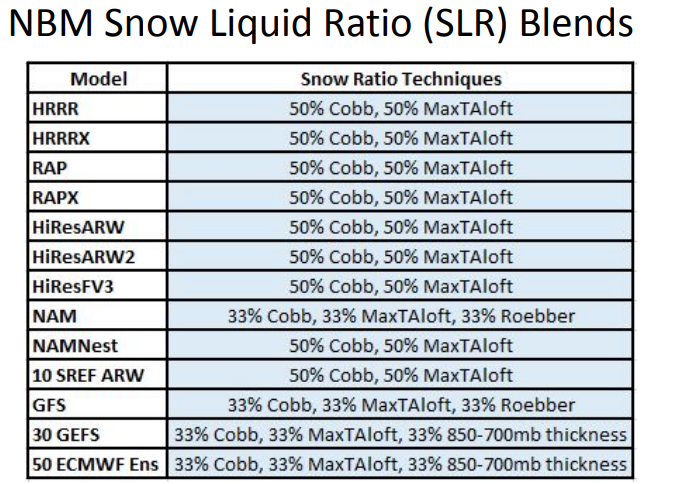

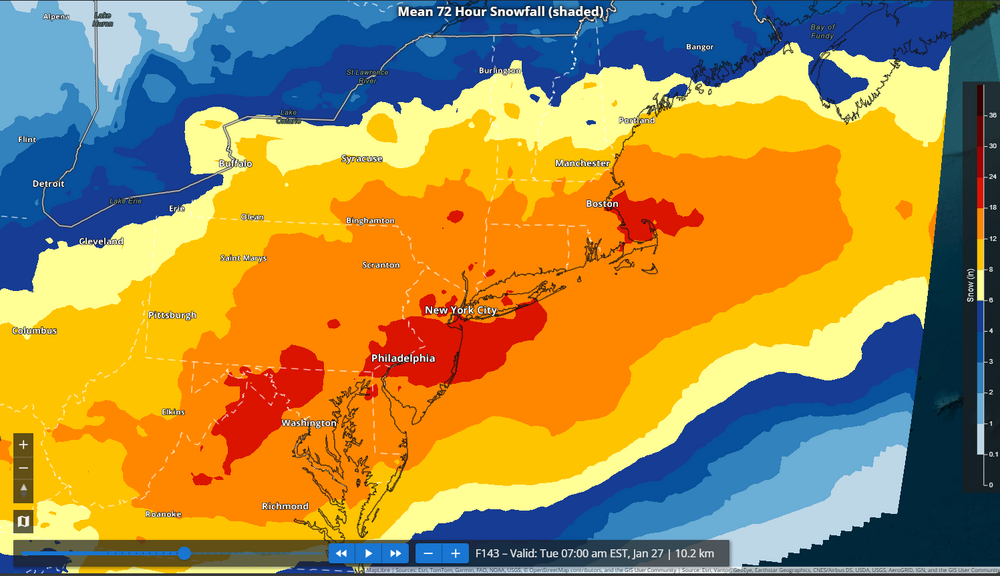

Go to this link, https://vlab.noaa.gov/web/mdl/nbm-weather-elements, then click the parameter you're curious about. If the field has a 'CONUS' option, click it. You'll get a table that specifies model weights. Despite the description, I still can't make sense of the NBM snowfall maps that correspond to their model weights. Something else seems to be missing... The map seems too high and I don't see much that supports those values b/n the SREFs, EPS, GEFS, and GFS.

It's frustrating, for sure. I think to be 100% satisfied with their documentation, they need to provide an example for one case study, i.e., provide a table of snowfall accumulations (and exactly what field/how they processed snowfall) for all ensemble members + diagnostic models, take the weighted sum of all ensembles/models, then show the final result superimposed onto their NBM map. When I ran the calculations as you did, my value was far off from what the map showed too. I do trust that their documentation is correct (constituent models and weights wrt time), but something else does seem to be missing. I may ask the developers when I have time, but I think Don already did this.

-

From hour 61-84, the NBM snowfall product (the blend differs per variable) is composed of the GEFS (30 members; weighted 24.75%), EPS (50 members; weighted 41.25%), GFS (weighted 4%), and SREF (10 members; weighted 30%). Likely, the SREFs and/or the EPS beefed up snowfall a little bit... The GEFS/GFS still look poor.
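
As a quick sanity check on those weights, here's a minimal Python sketch that converts each group weight into a per-member weight and confirms the total is 100%. The dictionary layout and the name `per_member_weights` are mine for illustration only; the member counts and percentages are the ones quoted above, and treating every member of a system as equally weighted is an assumption.

```python
# Hour 61-84 snowfall weights quoted in the post: (members, group weight in %).
# The deterministic GFS is treated as a single member.
NBM_SNOW_WEIGHTS_61_84 = {
    "GEFS": (30, 24.75),
    "EPS": (50, 41.25),
    "GFS": (1, 4.0),
    "SREF": (10, 30.0),
}


def per_member_weights(groups):
    """Return {system: weight per member in %}.

    Assumes equal weighting within each system (an assumption, not
    something the post states).
    """
    return {name: weight / members for name, (members, weight) in groups.items()}


total = sum(weight for _, weight in NBM_SNOW_WEIGHTS_61_84.values())
member_w = per_member_weights(NBM_SNOW_WEIGHTS_61_84)

print(f"Total weight: {total}%")
for name, w in member_w.items():
    print(f"{name}: {w:.3f}% per member")
```

Under that equal-weighting assumption, each GEFS and EPS member carries the same ~0.825%, while a single SREF member carries 3%, so a handful of snowy SREF members can move the blend more than the same number of GEFS/EPS members.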

-

Excellent! Now try running these modeling systems (similar to the AIGFS and EC-AIFS). They'll perform better and you'll get a good challenge out of it https://github.com/google-deepmind/graphcast

-

Based on the link I sent, let's see if this checks out... Both the EPS and GEFS have the same weights: 1.2% for each individual member (1.2% * [50+30] = 96%)... The GFS has a weight of 4%, so the ensemble snowfall contribution would be,

9 = 8 GEFS + 1 EPS

SF_ens = 9 * 0.012 * 6" + 1 * 0.012 * 16"

SF_ens ~ 1"

NBM snowfall is SF_ens + 0.04 * SF_GFS. I don't know what the GFS had for the city at 12z, but it could be calculated;

NBM = 5.9" based on the map

5.9" = 1" + 0.04 * SF_GFS

SF_GFS = 4.9"/0.04 = a very unrealistic answer...

So something is off. Do you have the 6z totals? Mathematically, that's how the NBM (imo) calculates its mean.
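
The same back-of-envelope check can be sketched in a few lines of Python. This only reproduces the arithmetic in the post; it is not the NBM's actual processing. The function names are hypothetical, the inputs are the numbers quoted above, and members not listed in the two terms are assumed to contribute 0".

```python
def weighted_snowfall(contributions):
    """Sum n * weight * snowfall over (member_count, weight_per_member, snowfall_in) tuples."""
    return sum(n * w * sf for n, w, sf in contributions)


def implied_gfs_snowfall(nbm_total, ens_part, gfs_weight=0.04):
    """Solve nbm_total = ens_part + gfs_weight * SF_GFS for SF_GFS."""
    return (nbm_total - ens_part) / gfs_weight


# Numbers from the post: 9 members at 6", 1 member at 16", each weighted 1.2%.
# Assumption: every other member contributes 0" for this location.
sf_ens = weighted_snowfall([(9, 0.012, 6.0), (1, 0.012, 16.0)])

# NBM map value quoted in the post: 5.9".
sf_gfs = implied_gfs_snowfall(5.9, sf_ens)

print(f'SF_ens = {sf_ens:.2f}"')
print(f'Implied SF_GFS = {sf_gfs:.1f}"')
```

Without rounding, the ensemble term comes to about 0.84" and the implied GFS total is well over 100" (about 122" if SF_ens is rounded to 1" as in the post), which is why the weighted-mean interpretation alone can't explain the map.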

-

Oh, the irony! I just commented about this in a different thread. At hour 84+, for snowfall specifically, the NBM uses the EPS (50 members), GEFS (30 members), and the GFS (https://vlab.noaa.gov/documents/6609493/32850490/CONUS_SNOICEACCUM.pdf)... So there must be some GEFS/EPS members that still support a (significant) snow event. You'd have to look at the individual members themselves to determine how (are there a few members skewing the mean, are there two different 'camps', etc.) that map you posted above is produced. I thought it was odd too. For skewness, you could also look at quartile ranges provided at https://sites.gsl.noaa.gov/desi/?x4dLocations=["City"]&chart=x4d&lat=40&lon=-105&theme=dark&timeZone=local&hourFormat=12&x4dviewState={"latitude"%3A40.5%2C"longitude"%3A-100%2C"bearing"%3A0%2C"pitch"%3A0%2C"zoom"%3A4}&dset=HREF-CONUS&clusHghlgt=true&x4dMapStyle=3D&x4dMaps={"basemap"%3A{"value"%3A"Mapbox"}%2C"mapboxBasemap"%3A{"value"%3A"Satellite"%2C"parentValue"%3A"mapboxBasemap"}%2C"Airports"%3A{}%2C"ARTCC"%3A{}%2C"Cities"%3A{"checked"%3Atrue}%2C"Coastlines"%3A{"checked"%3Atrue}%2C"County+lines"%3A{}%2C"Countries"%3A{}%2C"Country+lines"%3A{}%2C"Countries+NonUS"%3A{}%2C"Country+NonUS+lines"%3A{}%2C"CWAs"%3A{}%2C"Graticules"%3A{}%2C"HUC+6+(CONUS+%26+OCONUS)"%3A{}%2C"HUC+8+(CONUS)"%3A{}%2C"PSAs"%3A{}%2C"RFCs"%3A{}%2C"Roads"%3A{}%2C"State+lines"%3A{}%2C"Tribal+Lands"%3A{}%2C"Vulnerability"%3A{"value"%3A"Social+Vulnerability+Index"%2C"parentKey"%3A"Vulnerability"%2C"parentValue"%3A"Vulnerability"}%2C"Watches%2FWarnings%2FAdvisories"%3A{}%2C"Zones+(public)"%3A{}%2C"Zones+(fire+wx)"%3A{}%2C"Zones+(coastal+marine)"%3A{}%2C"Zones+(offshore+marine)"%3A{}}&preferredFontSize=14&x4dDset={"renderOptions"%3A"t2"%2C"plotargs"%3A[{"fields"%3A["t2"]%2C"fieldOption"%3A"statisticalMeasures"%2C"trackingID"%3A"tracking_643d2e8c-941e-4f4a-99f4-e0f3eeab9bd4"%2C"layerorder"%3A1769554913471}]%2C"name"%3A"statistics"%2C"default"%3Atrue}&x1dGroup=Default&x1dSection=overview&x1dSingleField=t2&x1dGraphStyle=pdf&x2dGraphStyle=boxwhisker

-

Possible coastal storm centered on Feb 1 2026.

MegaMike replied to Typhoon Tip's topic in New England

The 13z NBM has a 12-18" mean contour for SE MA (looks similar to the map DIT posted). According to dat' link (https://vlab.noaa.gov/documents/6609493/32850490/CONUS_SNOICEACCUM.pdf), snowfall values at 84hr+ are calculated from 30 GEFS members, 50 ECMWF members, and the GFS. Needless to say, some individual members still support this event.

12z GEFS fcst hr 138:

12z EPS fcst hr 138 (last available hour at the moment):

Snow up to thy' neck... The trooper is 24" tall and snow is ~2/3rds to the top of the figurine. I'd call it ~13-16" (removing some inches that drifted from the roof). Just shoveled the driveway and measured just under 11" on my car. Getting a fine snow that steadily accumulates. Spent about 45mins shoveling and picked up a half inch. Good day overall and it's still snowing!

-

Ignore it for the coastline. Snow depth is a diagnostic product from each modeling system. It's masked for bodies of water... If you interpolate snow depth from a body of water (always 0") to a place over land (>>>0", in this case), it'll always have a tight gradient for coastal locations.
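
To see why that happens, here's a minimal 1-D Python sketch of linear interpolation between a masked water grid point and an adjacent land grid point. The 3 km spacing and the 12" land depth are made-up illustration values, and real plotting packages may use other interpolation schemes.

```python
def lerp(v0, v1, t):
    """Linearly interpolate between v0 (at t=0) and v1 (at t=1)."""
    return v0 + (v1 - v0) * t


# Hypothetical setup: two adjacent grid points (values are made up).
water_depth_in = 0.0    # masked water point, always 0"
land_depth_in = 12.0    # made-up snow depth at the first land point
grid_spacing_km = 3.0   # made-up grid spacing

# Sample the interpolated depth at a few positions between the two points.
for frac in (0.0, 0.25, 0.5, 0.75, 1.0):
    depth = lerp(water_depth_in, land_depth_in, frac)
    print(f'{frac * grid_spacing_km:.2f} km from the water point: {depth:.1f}"')

# The whole land value is lost across a single grid cell.
gradient = (land_depth_in - water_depth_in) / grid_spacing_km
print(f"Gradient: {gradient:.1f} in/km")
```

The drop to 0" happens entirely within one grid cell, so the tight coastal gradient on the map is an artifact of the mask, not a forecast signal.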

-

I think @EastonSN+ is asking for someone to post it. Generally, a little less QPF for all locations... I wouldn't overanalyze it though. It's about time to focus on regional/meso-scale models.

-

It uses several different algorithms + a bunch of different modeling systems < https://vlab.noaa.gov/documents/6609493/6665561/NBM_v4.2_Eval_SlideDeck.pdf >. Definitely an improvement over the traditional 10:1 methodology.

-

It's a great website and there's a lot provided! It's free and the HREF (and others) is available too. For the weenies: https://sites.gsl.noaa.gov/desi/?x4dLocations=%5B%22City%22%5D&chart=x4d&lat=40&lon=-1[…]1dSingleField=t2&x1dGraphStyle=pdf&x2dGraphStyle=boxwhisker For now, I'd stick with BUFKIT although the NBM does incorporate the Cobb snowfall algorithm. For S&Gs, the 13z NBM has ratios ~12-20:1 throughout the event. It's a bit early for this (and snowfall maps), but man... I miss following potential snow events.

-

-

January 2026 regional war/obs/disco thread

MegaMike replied to Baroclinic Zone's topic in New England


I can't find anything operational for the Clankers (AIGFS, etc.), but here's RMSE (x) versus isobaric surface (y) for some of the major diagnostic modeling systems at forecast hour 120 (day 5), for the northern hemisphere, and for the past 31 days:

The smaller the RMSE value, the better the results... So, if I had to rank them as an aggregate of all isobaric surfaces, it'd go: 1) ECMWF 2) UKMET~=CMC [pretty close] 3) GFS 4) JMA 5) FNMOC 6) CFS. Let's remember, weenies, this is for one forecast hour, for the northern hemisphere, and for the past 31 days. Accuracy changes when you adjust any of those parameters. That said, dabble with that if you'd like > https://www.emc.ncep.noaa.gov/users/verification/global/gfs/prod/atmos/grid2obs/hgt/ (it takes a while for the website to load on Chrome, unfortunately).
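
For anyone who wants to see what an "aggregate over all isobaric surfaces" ranking looks like mechanically, here's a minimal Python sketch. Everything in it is a placeholder: the model names (`model_a`, `model_b`), the levels, and the values are made up and are NOT real verification statistics, and a plain average of RMSE across levels is just one assumed way to aggregate (the ranking in the post was done by eye from the plot).

```python
import math


def rmse(forecast, observed):
    """Root-mean-square error between two equal-length sequences."""
    if len(forecast) != len(observed):
        raise ValueError("forecast and observed must have the same length")
    return math.sqrt(sum((f - o) ** 2 for f, o in zip(forecast, observed)) / len(forecast))


def rank_by_mean_rmse(forecasts, observations):
    """Rank models by RMSE averaged over levels (lower is better)."""
    means = {}
    for model, by_level in forecasts.items():
        scores = [rmse(vals, observations[level]) for level, vals in by_level.items()]
        means[model] = sum(scores) / len(scores)
    return sorted(means, key=means.get), means


# Made-up geopotential heights (m) at two isobaric levels (hPa); placeholders only.
observations = {500: [5400.0, 5460.0, 5520.0], 850: [1500.0, 1530.0, 1560.0]}
forecasts = {
    "model_a": {500: [5403.0, 5456.0, 5520.0], 850: [1500.0, 1533.0, 1556.0]},
    "model_b": {500: [5410.0, 5450.0, 5530.0], 850: [1510.0, 1520.0, 1570.0]},
}

ranking, mean_scores = rank_by_mean_rmse(forecasts, observations)
for place, model in enumerate(ranking, start=1):
    print(f"{place}) {model}: mean RMSE = {mean_scores[model]:.2f} m")
```

Since gph RMSE typically grows with height, an unweighted average lets the upper levels dominate; normalizing per level before averaging would be a reasonable alternative.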

First Legit Storm Potential of the Season Upon Us

MegaMike replied to 40/70 Benchmark's topic in New England


I never imagined this forum would diverge into a discussion about AI. Admittedly, your last sentence is difficult to accept. I'm sure most of us feel the same too. To add to the discussion, here's the 12z ensemble spread and diagnostic prate/type at 90hr... There's still uncertainty amongst the ensembles/models, so we're not entirely dead yet. If I had to stratify it as is (for measurable precipitation), I'd call it the AIs+ICON+CMC vs. GFS+ECMWF+UKMET.

First Legit Storm Potential of the Season Upon Us

MegaMike replied to 40/70 Benchmark's topic in New England

That's a fair point. I didn't want to get technical, but I'll restate it as, "the ceiling for AI should be that of current NWP + bias correction." I've mentioned in the past that AI should be used to bias-correct ic/bcs, so I don't disagree. On top of bias correction, I imagine the analysis datasets already incorporate 'nudging.' This is only done for the ic/bcs prior to initialization though, so you'd still need to do gridded bias correction post-simulation.
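
As a rough illustration of what gridded bias correction post-simulation can mean, here's a minimal Python sketch that removes a per-cell mean bias estimated from past forecast/analysis pairs. Mean-bias removal is just one simple technique I'm assuming for the example, not necessarily what anyone runs operationally; the function names and the tiny 2x2 grids are made up.

```python
def mean_bias(forecasts, references):
    """Per-cell mean of (forecast - reference) over past cases on the same grid."""
    n_cases = len(forecasts)
    n_rows, n_cols = len(forecasts[0]), len(forecasts[0][0])
    return [
        [
            sum(forecasts[k][i][j] - references[k][i][j] for k in range(n_cases)) / n_cases
            for j in range(n_cols)
        ]
        for i in range(n_rows)
    ]


def apply_bias_correction(forecast, bias):
    """Subtract the estimated bias from a new forecast, cell by cell."""
    return [[f - b for f, b in zip(f_row, b_row)] for f_row, b_row in zip(forecast, bias)]


# Two made-up past cases on a 2x2 grid (placeholder values, e.g. 2 m temperature in C).
past_forecasts = [[[2.0, 1.0], [0.0, 3.0]], [[4.0, 1.0], [2.0, 3.0]]]
past_references = [[[1.0, 1.0], [0.0, 1.0]], [[3.0, 1.0], [2.0, 1.0]]]

bias = mean_bias(past_forecasts, past_references)
corrected = apply_bias_correction([[5.0, 5.0], [5.0, 5.0]], bias)

print("Bias:", bias)
print("Corrected forecast:", corrected)
```

The reference grids could be an analysis dataset or gridded observations; which one you pick determines what "bias" actually means here.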

First Legit Storm Potential of the Season Upon Us

MegaMike replied to 40/70 Benchmark's topic in New England

Thanks, dude. I always think of Ian Malcolm's quote from Jurassic Park when AI models are mentioned: Data scientists are so "preoccupied with whether or not they could, that they didn't stop to think if they should." I think they're more useful for climatological/ensemble purposes. Their resolution is too coarse for nowcasting, and whether people like it or not, the best real-time product we have is the HRRR (the only model that updates every hour, not counting the RRFS). Users just need to understand its limitations... Within a few hours = good ||| outside a few hours = meh ||| beyond a PBL cycle = ignore...

I've been thinking; theoretically, the ceiling for AI should be that of current NWP... I don't think it's possible to outperform the dataset it's trained on, so to improve AI, you must improve NWP <OR> increase the size of your training dataset. As a result, NWP will never be phased out. :fist bump:

If I remember correctly, the evaluation was conducted wrt an analysis dataset (not in-situ locations). To me, that implies they're evaluating its efficacy (can it 'hang' with a traditional modeling system?) and not its accuracy. I did this too when I compressed assimilation data and reran CMAQ simulations when I worked with the EPA. I won't trust AI until evaluations are conducted at remote sensing stations. Analysis datasets aren't entirely accurate.