April Forecast Verification for Inland Connecticut

I've been creating daily 6-day forecasts for the better part of this year, with a focus on inland Connecticut. Before making any forecast, I take a close look at the computer model forecasts through Day 6, along with a few forecast techniques, and I track how each one verifies. In April, I had 29 days' worth of data, out of a possible 30, to measure forecast accuracy.

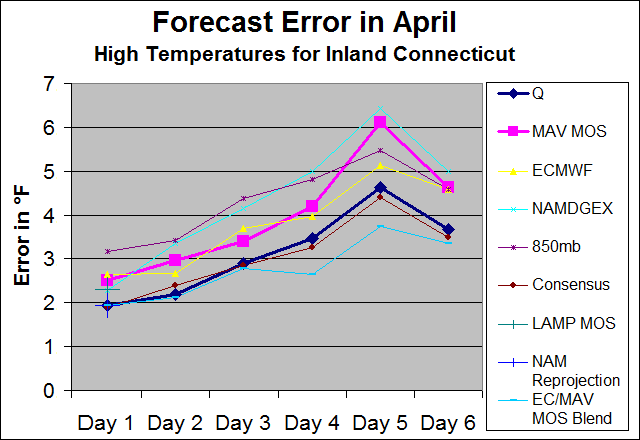

As expected, forecast error generally increases with time. It is interesting to note a spike at Day 5 and a decrease at Day 6. That goes back to two particular days that had poor Day 5 forecasts vs. actual temperatures. The spread is relatively uniform as well. The error with the NAM model does seem to increase faster with time than the others, which is not a surprise. DGEX data was used for Days 5 and 6.
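For anyone who wants to reproduce this kind of chart, here is a minimal sketch of how error by lead time could be computed. I'm assuming "forecast error" means the mean absolute error of the forecast high, and the temperatures below are made-up illustration values, not the April data.

```python
from statistics import mean

def mean_absolute_error(forecasts, observations):
    """Average of |forecast - observed| over paired highs (°F)."""
    return mean(abs(f - o) for f, o in zip(forecasts, observations))

# Hypothetical highs (°F) for three verification dates, NOT the April data.
observed = [58, 63, 71]

# Forecasts for those same dates, keyed by how many days ahead they were made.
forecasts_by_lead = {
    1: [57, 64, 70],  # errors of 1, 1, 1
    5: [52, 66, 62],  # errors of 6, 3, 9 (one bad day dominates)
}

mae_by_lead = {
    lead: mean_absolute_error(fcst, observed)
    for lead, fcst in forecasts_by_lead.items()
}
```

With a sample this small, one or two badly missed days can move the average a lot, which is how a couple of poor Day 5 forecasts can produce a spike.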

With respect to my own forecasts, I measure verification as a mean of inland temperatures across the state. When I look at the computer models, I choose Meriden (KMMK) as a central point because of its location near the center of the state. Since my own verification differs slightly from the control (KMMK), this may skew results slightly. For that reason, I will be creating 6-day forecasts specifically for Meriden going forward.

The Euro and MAV MOS rank fairly close, but it is very interesting to note that the negative (cold) bias the Euro has is almost a mirror reflection of the MAV MOS positive (warm) bias.

The MAV MOS appears to correct some of its bias towards Days 5 and 6. That can perhaps be partially explained by the fact that MOS is skewed towards climatological temperatures. The NAM also seems to have somewhat of a cool bias. I re-project highs from the NAM for Day 1, but that re-projection seems to overcompensate for the bias, at least in the case of April.
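Bias here can be read as the mean signed error (forecast minus observed), so a cold bias comes out negative and a warm bias positive. A rough sketch, with invented numbers and hypothetical model names standing in for real output:

```python
from statistics import mean

def bias(forecasts, observations):
    """Mean signed error (°F): positive = too warm, negative = too cold."""
    return mean(f - o for f, o in zip(forecasts, observations))

# Hypothetical highs (°F), NOT the April data.
observed = [60, 65, 70, 55]
cold_model = [58, 63, 69, 52]  # errors of -2, -2, -1, -3
warm_model = [62, 66, 73, 57]  # errors of +2, +1, +3, +2

cold_bias = bias(cold_model, observed)
warm_bias = bias(warm_model, observed)
```

Unlike absolute error, signed errors can cancel each other, so a forecast with near-zero bias can still have a large average miss.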

Explaining the models/forecasts...

Q: My forecast high temperatures for inland Connecticut. (mean of inland stations)

MAV MOS: Forecast high temperatures for KMMK. (06z model run)

ECMWF: Forecast grid-point high temperatures for KMMK. (00z model run)

NAMDGEX: Approximate high temperatures for KMMK. These values are read off a graphical forecast, so the numbers are estimated. I use the NAM for Days 1-4 and the DGEX for Days 5 and 6. (06z model runs)

850mb: An 850mb forecast technique that I have been working on for quite some time. Because this technique is based on Danbury (KDXR), that station is used for verification.

LAMP MOS: Forecast high temperatures for KMMK. (most recent run in morning)

NAM Re-projection: This takes into account the actual 9 a.m. temperature vs. the 06z forecast for 9 a.m. for KMMK. That error is then re-projected into the high temperature forecast. Example: If the 9 a.m. temperature was 2°F warmer than forecast, then 2°F is added to the high temperature forecast.

Consensus: A mean of each forecast above, including my previous forecast (continuity)
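The NAM re-projection and the consensus are both simple arithmetic, so here is a short sketch of each. The function names and all of the temperatures are hypothetical, and I'm assuming the consensus is a plain unweighted mean with continuity counted as one more member.

```python
from statistics import mean

def reproject_high(forecast_high, forecast_9am, observed_9am):
    """Shift the forecast high by the 9 a.m. error (observed minus forecast)."""
    return forecast_high + (observed_9am - forecast_9am)

def consensus(forecast_highs):
    """Unweighted mean of every forecast high in the dict (assumed weighting)."""
    return mean(forecast_highs.values())

# 9 a.m. came in 2°F warmer than the 06z run expected, so the high goes up 2°F.
nam_high = 64
reprojected = reproject_high(nam_high, forecast_9am=48, observed_9am=50)

# Hypothetical Day 1 highs (°F) for each entry in the list above.
day1 = {
    "Q": 65,
    "MAV MOS": 66,
    "ECMWF": 63,
    "NAMDGEX": nam_high,
    "850mb": 65,
    "LAMP MOS": 65,
    "NAM Re-projection": reprojected,
    "Continuity": 66,
}
day1_consensus = consensus(day1)
```

Note that the re-projection assumes the morning error carries through unchanged to the afternoon high, which will not always hold.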

How accurate was a Euro/MAV MOS blend? Well, not only do the opposing biases balance out close to zero, but the overall forecast error was less than any other forecast technique for Days 2-6.
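To show why a blend can beat both of its inputs, here is a minimal sketch. I'm assuming an equal 50/50 average of the two forecast highs, and the numbers are invented so that one model runs cold and the other warm; they are not the April values.

```python
from statistics import mean

def blend(highs_a, highs_b):
    """Equal-weight average of two forecast series (assumed 50/50 blend)."""
    return [(a + b) / 2 for a, b in zip(highs_a, highs_b)]

def bias(forecasts, observations):
    """Mean signed error (°F): positive = too warm, negative = too cold."""
    return mean(f - o for f, o in zip(forecasts, observations))

def mean_absolute_error(forecasts, observations):
    """Average size of the miss (°F), ignoring sign."""
    return mean(abs(f - o) for f, o in zip(forecasts, observations))

# Hypothetical highs (°F), NOT the April data.
observed = [60, 65, 70, 55]
euro = [58, 64, 67, 53]  # cold: errors of -2, -1, -3, -2
mav = [62, 67, 72, 57]   # warm: errors of +2, +2, +2, +2

blended = blend(euro, mav)
blend_bias = bias(blended, observed)
blend_mae = mean_absolute_error(blended, observed)
euro_mae = mean_absolute_error(euro, observed)
mav_mae = mean_absolute_error(mav, observed)
```

The blend only helps this much when the two models tend to miss in opposite directions on the same days. If both ran cold, averaging them would leave the bias in place.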

It's pretty interesting to see the results. It goes beyond comparing computer model verification. In order to become a better forecaster, I want to see which forecasts have worked out, which ones haven't, and whether I have any biases. This is only one month's worth of data, so more will need to be compiled over the long run to see how models perform. I also expect that different models/techniques will perform differently depending on the season, weather pattern, etc.
